Sony has been implementing Hybrid Log Gamma across a number of their cameras over the past few years. In their infinite wisdom, they also decided to hack the HLG specification in order to produce the anomalies that are HLG1, HLG2 and HLG3. In this write-up we will cover off Hybrid Log Gamma on Sony cameras – warts and all.

Let’s start off by highlighting that we have a few problems with Sony cameras. I am by no means anti-Sony – I personally own and use the RX0 II as a rugged helmet-mounted camera when out fighting wildfires (I volunteer as a Firefighter). Despite the lack of an HLG picture profile, it does an amazing job under extreme conditions due to an aluminum body acting as a heat spreader to disperse internal heat. With that said, I am an Engineer and it’s hard to ignore the glaring issues on Sony cameras.

Yes the User Interface leaves much to be desired and the camera firmware can be buggy, but that’s not what we’re talking about in this write-up. We’re here to focus on Hybrid Log Gamma in relation to Sony cameras.

8 Bits of Glory

Until the recent advent of the A7S III and subsequent cameras, Sony has been implementing 8-bit 4:2:0 Internal and 8-bit 4:2:2 External recording across their hybrid camera ranges – even for HLG! The Hybrid Log Gamma system was conceived from the outset as a broadcast format of the future with a minimum of 10-bit encoding in mind. It was specifically geared for the High Dynamic Range (HDR) age and the paltry 8-bit encoding on offer by Sony simply doesn’t cut the mustard. Worse yet, the internal recording uses 4:2:0 chroma subsampling – meaning even less data crammed into an inefficient XAVC codec that already suffers from red hue shift issues.

An 8-bit signal only offers 256 levels of gradation (0 – 255) whereas a 10-bit signal offers four times as many at 1024 levels (0 – 1023). HLG is also encoded using Legal ranges, meaning that an 8-bit RGB signal actually only uses 219 steps of gradation, from code value 16 to 235. With chroma subsampling thrown into the mix, 8-bit is a horrible proposition for any recording format, let alone an HDR format!
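The arithmetic behind these figures can be sketched as follows. This is only an illustration, assuming the usual legal (narrow) range convention of black at code 16 and nominal white at code 235 in 8-bit, with both values scaled by four for 10-bit; the function name is made up for the example.

```python
def legal_range(bit_depth):
    """Return (black, white) code values for legal/narrow range video.

    Assumes the common 16-235 convention at 8-bit, scaled by
    2**(bit_depth - 8) for higher bit depths (64-940 at 10-bit).
    """
    scale = 2 ** (bit_depth - 8)
    return 16 * scale, 235 * scale


for bits in (8, 10):
    black, white = legal_range(bits)
    total_codes = 2 ** bits
    steps = white - black
    print(f"{bits}-bit: {total_codes} codes in total, "
          f"legal range {black}-{white}, {steps} steps from black to white")
```

Under these assumptions the 8-bit legal range leaves 219 steps between black and white, against 876 steps at 10-bit.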

We should at least give Sony credit for implementing HLG though, as it is far superior to the S-Log implementations in many ways. One major aspect of this superiority is that the Hybrid Log Gamma implementation allows for maximum dynamic range from a sensor (S-Log3 for example is hobbled at 1300% of an SDR signal). Another major advantage is the sensor gamut coverage of the Rec.2020 color space compared to the larger S-Gamut color space, though I aim to cover the HLG vs. LOG profiles comparison in extensive detail in another write-up.

The HLG drama

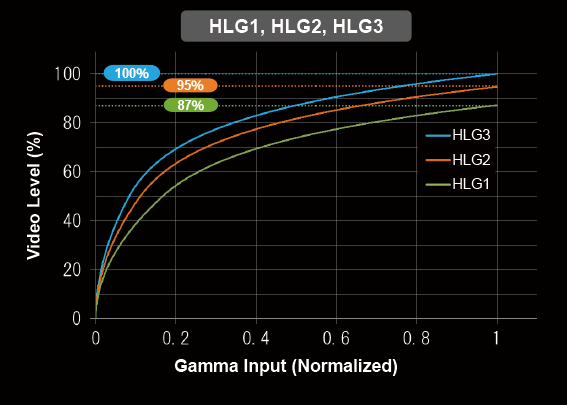

Sony have quoted the following in their documentation with regards to the custom HLG implementations:

[HLG1], [HLG2], and [HLG3] all apply a gamma curve with the same characteristics, but each offers a different balance between dynamic range and noise reduction. Each has a different maximum video output level, as follows: [HLG1]: approx. 87%, [HLG2]: approx. 95%, [HLG3]: approx. 100%.

Source: Sony Online documentation

Sony also provide a visualization of the respective custom HLG curves.

One problem with this visualization is the fact that Sony is showing a Gamma input that has been normalized to the 0 – 1 range. An 18% Middle Gray calculation needs to factor this normalization in when obtaining the correct exposure values. Unfortunately, Sony doesn’t elaborate on the correct IRE values for Middle Gray exposures. Their in-camera exposure metering also appears to be calculated using the ITU BT.709 curve – not using the true HLG curves. As a result, the average user becomes “undone” by misinformation on the internet and across mass consumption platforms such as YouTube where content creators also receive endorsements, sponsorships and freebies from none other than Sony themselves. For posterity, the MATHEMATICALLY CORRECT 18% Middle Gray values for Sony’s HLG implementations are listed below:

| HLG Type | IRE Value |
|---|---|
| Sony HLG 1 | 19% IRE |
| Sony HLG 2 | 20% IRE |
| Sony HLG 3 | 21% IRE |
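For reference, the figure for the standard BT.2100 curve (which HLG3 closely follows) can be reproduced from the published HLG OETF. The sketch below assumes scene-linear input on a 0 – 12 scale where 1.0 is SDR peak white and 12.0 is the clipping point; that scaling is an assumption on my part, chosen because it puts SDR peak white at a 50% signal. It does not attempt to model Sony's HLG1 or HLG2 curves, whose exact equations are not given here.

```python
import math

# HLG OETF constants from ITU-R BT.2100
A = 0.17883277
B = 1 - 4 * A                  # approx. 0.28466892
C = 0.5 - A * math.log(4 * A)  # approx. 0.55991073


def hlg_oetf(e):
    """Scene-linear light (0..12, 1.0 = SDR peak white) -> HLG signal (0..1)."""
    e = max(e, 0.0)  # guard against negative input
    if e <= 1.0:
        return math.sqrt(e) / 2         # square-root (gamma) segment
    return A * math.log(e - B) + C      # logarithmic segment


print(f"18% gray       -> {hlg_oetf(0.18) * 100:.1f}% signal")
print(f"SDR peak white -> {hlg_oetf(1.0) * 100:.1f}% signal")
print(f"clipping point -> {hlg_oetf(12.0) * 100:.1f}% signal")
```

With this normalization, 18% gray lands at roughly 21% of the signal range, in line with the HLG3 row of the table.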

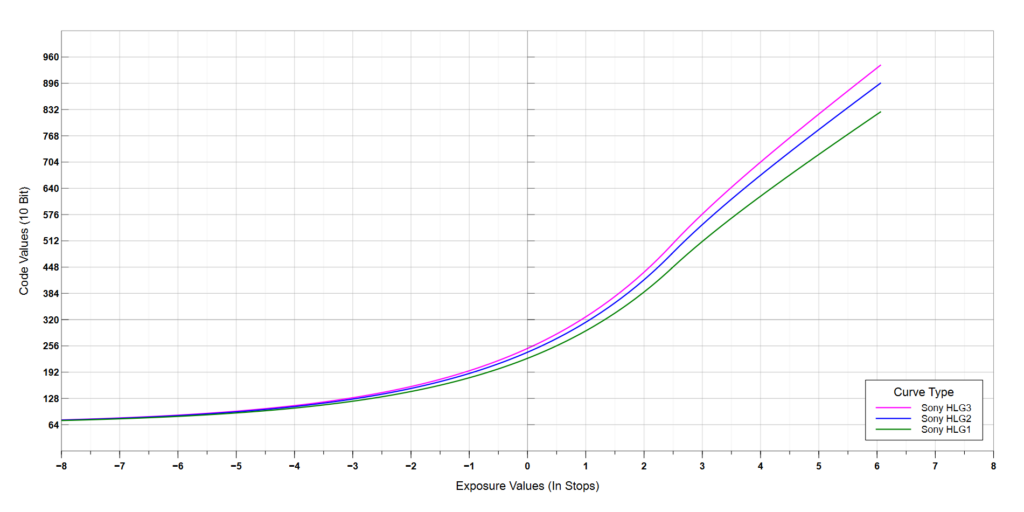

When we expand the Sony HLG equations on a characteristic curve with reference to Middle Gray, we can see the resulting picture and issues far more clearly.

Firstly, Sony’s HLG3 profile is almost the same as the HLG BT.2100 standard, but has slightly lifted shadows and, from preliminary analysis, seems to exhibit fewer of the spurious encoding issues that often manifest as red chroma noise/splotches across a scene.

Let’s revisit the technical commentary by Sony around reduced noise vs less dynamic range. What Sony have wrongly done is to conflate Dynamic Range with Coding Values. As is clearly visible from the Characteristic Curves above, HLG1 and HLG2 reach a peak at lower overall coding values. If we narrow these signals down to 8-bit as present on most Sony cameras, we then begin to see less tonal definition and more banding across the image. For a regular HLG BT.2100 & HLG3 curve on an 8-bit camera, there are theoretically 219 steps of gradation from pitch black to absolute white. This is further reduced to 208 steps of gradation for HLG2, and the lame duck HLG1 profile gets by with a paltry 191 steps of gradation! Essentially, there are fewer code values being utilized in the encoding, thereby artificially reducing the overall dynamic range due to increased banding. Simply put, HLG2 is slightly worse than HLG3 and HLG1 is just the worst profile of the HLG lot. The main inhibitor here is the inefficient use of the 8-bit signal in coordination with the ugly red chroma splotches thanks to the 4:2:0 H.264 XAVC encoding.
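The step counts quoted above follow from scaling the 219 legal-range steps by each profile's peak output level. A minimal sketch, assuming Sony's approximate peak levels (87%, 95% and 100%) apply directly to the 8-bit legal range and rounding to the nearest whole step:

```python
LEGAL_STEPS_8BIT = 235 - 16  # 219 steps between legal black and white

# Approximate maximum output levels quoted in Sony's documentation
peak_levels = {"HLG1": 0.87, "HLG2": 0.95, "HLG3": 1.00}

# Usable steps of gradation per profile, rounded to whole code values
steps = {name: round(LEGAL_STEPS_8BIT * peak)
         for name, peak in peak_levels.items()}

for name, count in steps.items():
    lost = steps["HLG3"] - count
    print(f"{name}: {count} steps ({lost} fewer than HLG3)")
```

Because Sony only quotes approximate percentages, these counts are theoretical; the real profiles may differ by a code value or two.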

In terms of noise reduction enhancements, the HLG1 and HLG2 picture profiles use a slightly lower signal amplification compared to HLG3 & HLG BT.2100. On the A7III for example, HLG1/HLG2 use a base ISO of 100, whereas the HLG3/BT.2100 profiles use a base ISO of 125. Any difference in signal amplification between the various HLG profiles is largely negligible and, by extension, any possible noise variances are also largely negligible if exposed correctly. When scenes are incorrectly exposed however, this is a completely different story.

Metering Exposed

Unfortunately, the exposure metering for HLG profiles on Sony cameras seems to align with the ITU BT.709 curve rather than a true HLG curve, as mentioned earlier. The end result of this behavior is that users may inadvertently overexpose their footage at 41 IRE across the HLG curves rather than at the true native middle gray values. The consequence of this incorrect exposure is a perceived reduction of dynamic range due to exposing to the right (ETTR). In reality, the dynamic range for each of those respective HLG profiles is still the same, however there are now more bits allocated to the mid-tones and shadows. If we expose these profiles at 49 – 50 IRE as promoted by some ill-informed YouTubers, this exposes the scene even further to the right, effectively reducing the available highlight range above middle gray to 3.5 stops for HLG3 down to 3 stops for HLG1 – only half a stop more highlight range than a standard Rec.709 curve!
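To see how the placement of middle gray eats into highlight range, the standard HLG curve can be inverted to find the scene-linear level that a given signal corresponds to, and the remaining headroom expressed in stops. This sketch covers the BT.2100 curve only (Sony's HLG1 and HLG2 equations are not modelled) and assumes a 0 – 12 scene-linear scale with 12.0 as the clipping point; the helper names are illustrative.

```python
import math

# HLG constants from ITU-R BT.2100
A = 0.17883277
B = 1 - 4 * A
C = 0.5 - A * math.log(4 * A)
PEAK = 12.0  # assumed scene-linear clipping point on the 0..12 scale


def hlg_inverse_oetf(signal):
    """HLG signal (0..1) -> scene-linear light (0..12)."""
    if signal <= 0.5:
        return 4 * signal ** 2              # inverse of sqrt(e) / 2
    return math.exp((signal - C) / A) + B   # inverse of the log segment


def headroom_stops(gray_signal):
    """Stops between the level where middle gray is placed and clipping."""
    return math.log2(PEAK / hlg_inverse_oetf(gray_signal))


for level in (0.212, 0.38, 0.41, 0.50):
    print(f"gray at {level * 100:.0f}% -> "
          f"{headroom_stops(level):.1f} stops of highlight range")
```

On the standard curve this gives about 6 stops above a correctly placed middle gray, a little over 4 stops at 41%, and about 3.6 stops at 50%, which is consistent with the roughly 3.5 stops quoted for HLG3.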

In order to expose for HLG on a Sony camera, the exposure meter should not be relied on at all. Instead, the Std+Range Zebra patterns should be used and set based on the respective middle gray values noted earlier. Refer to the Recording and Editing HLG Part 1 post for more details.

The International Telecommunication Union (ITU) has also published BT.2408 which recommends that for best production practices, Middle Gray should be exposed at 38% IRE in the case of HLG. This is sometimes also referred to as HLG 75%, where SDR peak white (an input of 1.0) outputs an HLG signal of 75% (compared to 50% for the HLG BT.2100 standard). By having HLG exposed at 38% IRE, the output will be somewhat “backwards compatible” when viewed on SDR screens – although the color gamut will be completely wrong, the initial portion of the HLG gamma curve will be somewhat close enough to the ITU-R BT.709 curve that a video won’t appear entirely flat like with other Log gammas.
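The 75% and 38% figures are linked by a single exposure gain, which can be checked numerically. This sketch assumes the standard BT.2100 HLG curve on a 0 – 12 scene-linear scale where 1.0 is SDR peak white; it solves for the gain that lifts SDR peak white from a 50% signal to 75%, then applies the same gain to 18% gray.

```python
import math

# HLG constants from ITU-R BT.2100
A = 0.17883277
B = 1 - 4 * A
C = 0.5 - A * math.log(4 * A)


def hlg_oetf(e):
    """Scene-linear light (0..12) -> HLG signal (0..1)."""
    e = max(e, 0.0)
    if e <= 1.0:
        return math.sqrt(e) / 2
    return A * math.log(e - B) + C


def hlg_inverse_oetf(signal):
    """HLG signal (0..1) -> scene-linear light (0..12)."""
    if signal <= 0.5:
        return 4 * signal ** 2
    return math.exp((signal - C) / A) + B


# Gain needed so that SDR peak white (1.0) lands at 75% instead of 50%
gain = hlg_inverse_oetf(0.75) / 1.0
gray_signal = hlg_oetf(0.18 * gain)

print(f"exposure gain: {gain:.2f}x ({math.log2(gain):.2f} stops)")
print(f"18% gray lands at {gray_signal * 100:.1f}% signal")
```

Under these assumptions the gain works out to a little over 3x (about 1.7 stops), and middle gray lands just under 38%.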

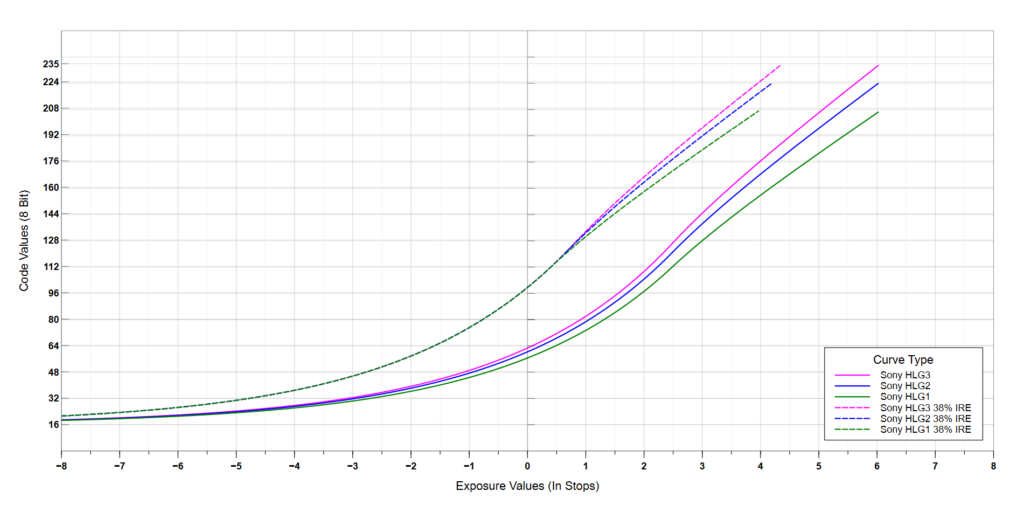

Shown above is an 8-bit characteristic curve comparing HLG1, HLG2 and HLG3 against the HLG curves exposed to the right at 38% IRE. As is clearly visible when exposed at 38% IRE, the HLG1 profile offers the lowest range in the highlights, but because it is exposed furthest to the right, it would also exhibit the lowest level of visible noise, despite the inefficient use of bits. The same level of noise reduction can also be achieved by exposing approximately 2+ stops to the right for the other Sony HLG profiles, which additionally provide more steps of gradation. Better yet, avoid HLG1 & HLG2 like the plague and stick to HLG/HLG3 exposed at 38% IRE (37.8% to be exact) if SDR delivery is the intent.

Decoding Sony HLG

So far, we have only covered the encoding of Sony HLG profiles, but we must also cover the ingestion and normalization (color correction) of this footage. Whilst the HLG3 profile is practically the same as HLG BT.2100 (which means that a standard HLG BT.2100 profile can be used for footage normalization), the HLG2 and HLG1 profiles are not standard as they’re limited to 95% and 87% of the full signal level respectively. When these HLG profiles are ingested and normalized based on the HLG BT.2100 specification, the footage is incorrectly normalized at lower levels resulting in an overall darkened scene – the gamma curves are simply not the same.

For footage exposed at 38% IRE based on the BT.2408 recommendation, normalizing based on the HLG BT.2100 specification will result in extremely blown out clips. Additionally, if any custom HLG profile is recorded using Rec.709 color primaries, these cannot be normalized using a standard HLG BT.2100 profile which is based on the Rec.2020 color primaries. In essence, these HLG profiles require normalization curves that are specific to their profile characteristics, both for the gamma and the color gamut.

At the time of publishing this article, outside of the HLG Normalized Plugin, the HLG Normalized transform and the HLG Normalized LUT Pack, no other solutions exist to normalize these custom HLG profiles – not even from Sony. The HLG Normalized Plugin works on Video Editing and Visual Effects applications that support the OpenFX standard such as Assimilate Scratch, DaVinci Resolve, Nuke and Fusion. The HLG Normalized Transform works in DaVinci Resolve and specifically includes Input Device Transforms (IDTs) for ACES workflows. The HLG Normalized LUT Pack can be used on external monitors & recorders or across video editing applications such as Adobe Premiere and Final Cut Pro where the HLG Normalized Plugin is not supported. These solutions will normalize HLG content by transforming it to the desired format, whether Rec.709, ACES or other Color Gamuts and Gammas.

Conclusion

Despite Sony’s claims to balance Dynamic Range against Noise Reduction, we have broken down the technical details of these custom profiles and hopefully clarified any misconceptions. The HLG1 profile is worse than the HLG2 profile, which is worse than the HLG3 profile. The HLG1 profile specifically peaks at 87% of a full signal level, using only a theoretical 191 steps of gradation compared to the 219 steps for HLG BT.2100 with an 8-bit encoding. The HLG2 profile peaks at 95% of a full signal level, theoretically using only 208 steps of gradation for an 8-bit encoding. The HLG1 and HLG2 profiles are therefore more susceptible to visible banding as a result of inefficient bit usage. Only when over-exposing a scene do these profiles offer tangible noise reductions, but this is simply a placebo resulting from exposing to the right (ETTR) – it’s an over-compensation due to their lower native middle gray IRE values. The same level of noise reduction can be achieved by over-exposing HLG3 / HLG BT.2100 profiles, but with more steps of gradation compared to HLG2 and HLG1. For example, HLG3 and HLG BT.2100 can be exposed at 38% IRE and normalized using the HLG BT.2408 preset on the HLG Normalized Plugin, Transform or LUT Pack. The differentiation between the HLG profiles may not seem like much, but 11 and 28 additional steps of gradation for an 8-bit signal certainly go a long way.

In summary, stick to HLG3 or the standard HLG profile on Sony cameras and expose at 38% IRE if your main focus is SDR delivery. The other custom HLG profiles are simply inefficient and far too niche for any useful purpose. Better yet, use a camera that records HLG properly with 10 bits of information.


Hello,

I wanted to express my appreciation for your three well-written posts related to Sony’s HLG picture profiles. Your posts provided valuable information about the benefits of using HLG (or HLG3) profiles over HLG1 and HLG2 profiles, particularly when using an ‘8-bit, 4-2-0’ camera. Thank you for sharing this insight.

Additionally, I gathered that, despite not being explicitly stated, footage shot with the Rec. 709 picture profile may have better quality than footage shot with any HLG profiles. Could you confirm if this is the case?

I have a question about noise reduction. Your post recommends over-exposing HLG3/HLG BT.2100. I am curious if you have a recommendation for HLG BT.2020, and if so, how much over-exposure is recommended.

As for my work, I shoot underwater videos using HLG2 picture profile, BT2020 (XAVC QFHD file format, 2160/30P, 100MBPS) with a Sony PXW-Z90 camcorder. I have noticed that I experience “red hue shift issues” and “red chroma noise/splotches across a scene” when the exposure and/or white balance are incorrect. I rely on the exposure meter when filming underwater, but I understand that the Range Zebra patterns could also be useful in theory.

Lastly, I noticed that your Plugins and LUT Packs for FCPX are for converting HLG to Rec.709, rather than just improving HLG footage. Is this correct?

Thank you for your time and expertise. If you are interested in seeing an example of my work, I would be happy to share a link to one of my ‘underwater’ videos shot in HLG2.

Best regards,

Val

Hi Val,

Rec.709 does not have better quality footage per se. Whether Rec.709 or HLG is better for your needs is dependent on numerous factors such as lighting requirements, dynamic range of the scene, picture profiles being used and ultimately your delivery format. Rec.709 is not always Rec.709 on cameras, as different picture profiles use gamma curves that differ from the ITU-R BT.709 standard. If there is no additional dynamic range required because highlights are not clipping and you are only delivering to Rec.709 Gamut SDR, then what benefit would HLG provide? It’s something you will need to ask yourself. However, if you would like to deliver beyond Rec.709 SDR by using a larger color gamut or a wider dynamic range, then HLG would best suit your needs.

In terms of noise reduction, I believe you are talking about HLG BT.2100… If noise is visibly an issue then I would recommend over-exposing by either one stop (30% IRE), or following the HLG BT.2408 recommendation and exposing at 38% IRE. Do keep in mind that this should not come at the expense of having to crank up the ISO as that would be counterproductive.

I would recommend you avoid the exposure meter and get comfortable with the range based zebra pattern instead. On the current crop of Sony cameras, this is THE BEST method of getting accurate exposures.

The Red hue shift and red splotching issues are largely a symptom of poor XAVC encoding of 8-bit chroma subsampled content. There is a marked increase in quality if you record to an external recorder with a higher bit-rate format – even if it is still 8-bit source output from the camera. Chroma Noise is inherent to the sensor and is especially visible with under-exposed footage. You may find better results with over-exposing in this scenario (again, without cranking up the ISO).

The Plugins and LUT Pack all apply corrective transfer functions and color space transforms to normalize the HLG footage, whether that be Sony HLG, or the standard HLG profiles. The current version of the LUT Pack focuses on SDR delivery and includes LUTs that will also perform tone and gamut mapping to bring the HLG HDR content back to SDR. The Plugins are meant for more advanced color workflows and include support for exposure adjustments, numerous color profiles and workflows such as ACES. There is nothing to “improve” for HLG as it is THE BEST video profile currently available on cameras and many manufacturers are including it by default which makes camera matching so much easier.